Calcifer

pending · by Anish S.

AI assistant with RAG, chat, auto-tagging, note organization, and memory for your vault.

🔥 Calcifer

AI-Powered Assistant for Obsidian

Your intelligent AI companion that understands your vault.

RAG-powered chat · Smart auto-tagging · Tool calling · Semantic search · Persistent memory


Features

- AI Chat with Vault Context

- Smart Auto-Tagging

- Tool Calling

- Memory System

- Note Organization

- Semantic Search

Screenshots

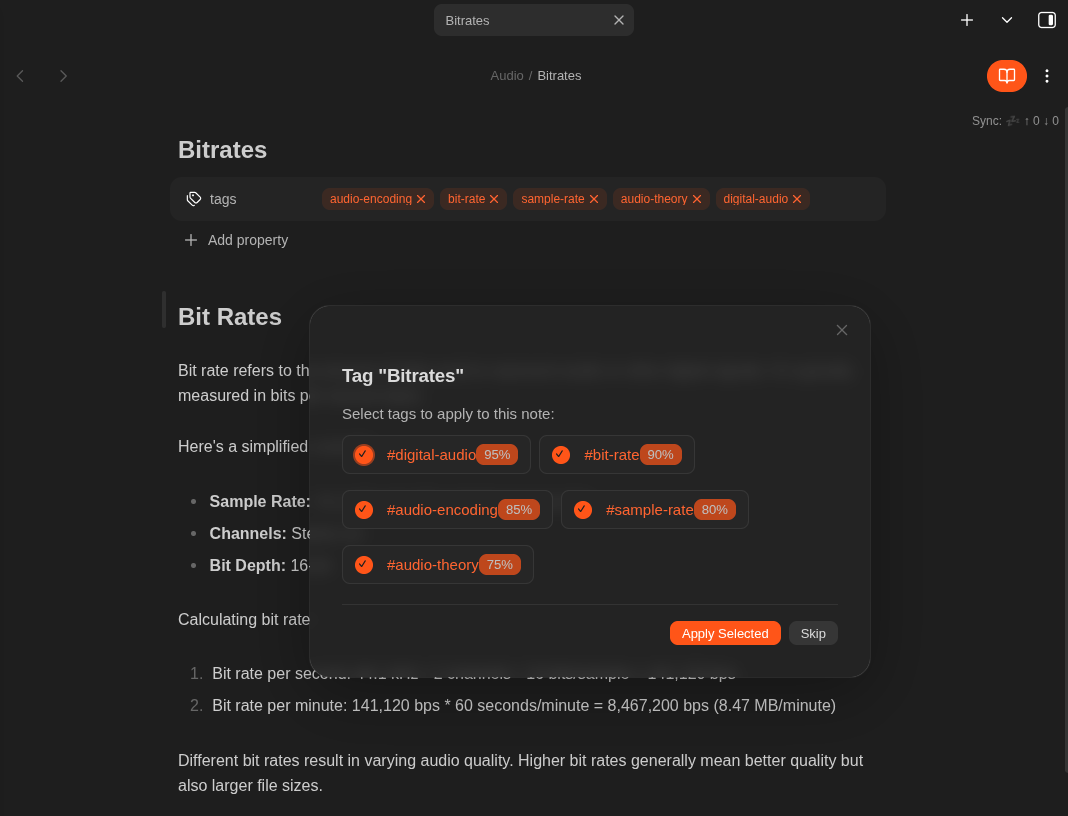

Auto Tag Suggestions

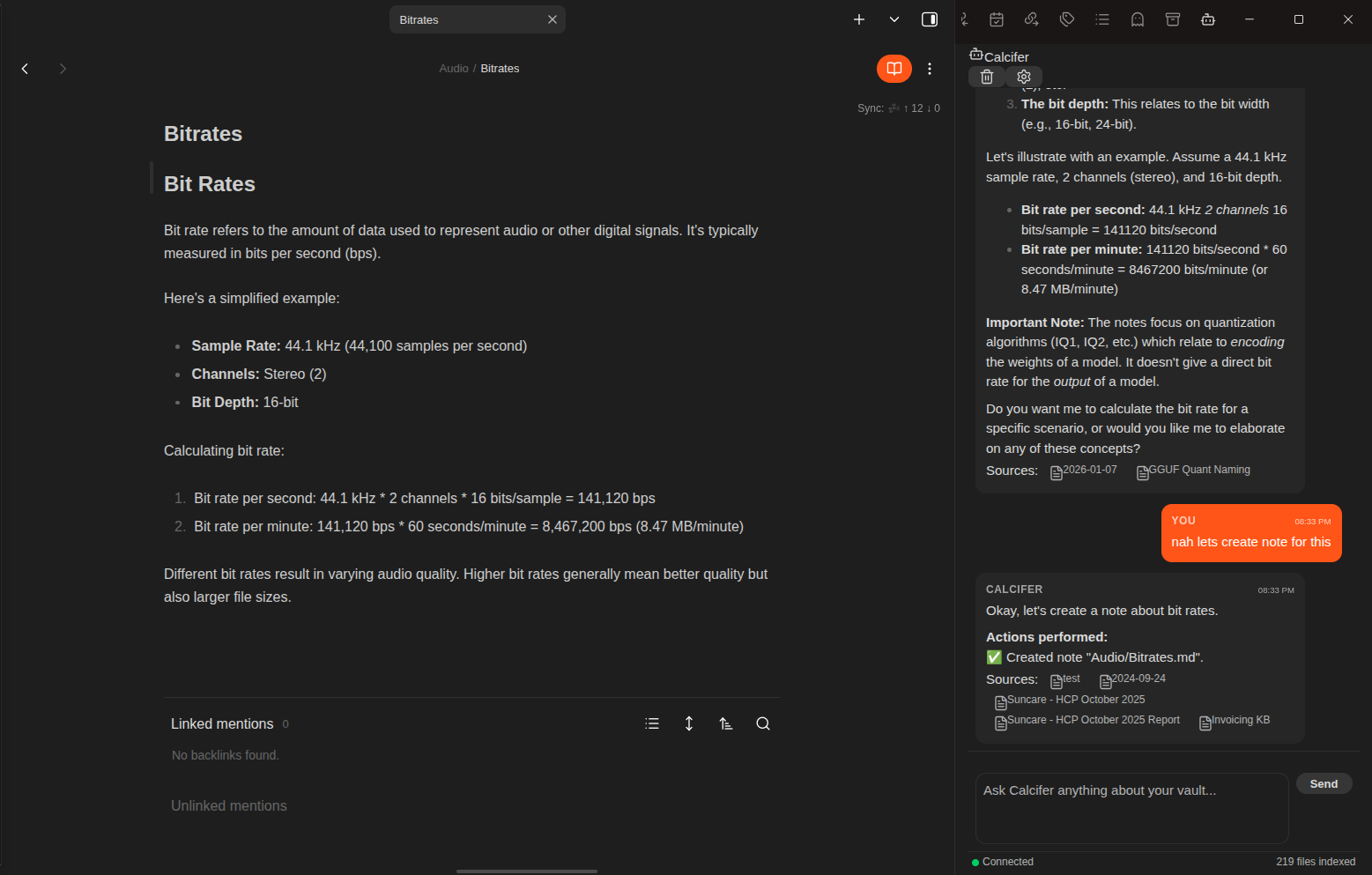

Tool Calling

Requirements

- Obsidian v1.0.0 or higher

- AI API endpoint (one of the following):

| Provider | Description |

|---|---|

| Ollama | Local or remote — complete privacy |

| OpenAI | Or compatible API (Azure OpenAI, etc.) |

Installation

From Community Plugins (Coming Soon)

- Open Settings → Community plugins

- Search for "Calcifer"

- Click Install, then Enable

Manual Installation

# 1. Download from the latest release:

# main.js, manifest.json, styles.css

# 2. Create the plugin folder

mkdir -p <vault>/.obsidian/plugins/calcifer/

# 3. Copy files and enable in Settings → Community plugins

Configuration

1. Add an API Endpoint

- Open Settings → Calcifer

- Click "Add Ollama" or "Add OpenAI"

- Configure the endpoint:

Ollama Configuration

Base URL: http://localhost:11434

Chat Model: llama3.2

Embedding Model: nomic-embed-text

OpenAI Configuration

Base URL: https://api.openai.com

API Key: sk-...

Chat Model: gpt-4o-mini

Embedding Model: text-embedding-3-small

- Click "Test" to verify connection

- Enable the endpoint
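
If the connection test fails for an Ollama endpoint, it can help to check by hand that the models named in the configuration are installed. The sketch below builds the URL of Ollama's model-listing endpoint (`GET /api/tags`) and compares the configured models against a list of installed names. The `OllamaConfig` type and both function names are hypothetical and only illustrate the idea; they are not Calcifer's actual code.

```typescript
// Hypothetical shape mirroring the Ollama fields listed above.
interface OllamaConfig {
  baseUrl: string;
  chatModel: string;
  embeddingModel: string;
}

// Ollama lists installed models at GET <baseUrl>/api/tags.
function tagsUrl(config: OllamaConfig): string {
  // Drop trailing slashes so the path is not doubled up.
  return config.baseUrl.replace(/\/+$/, "") + "/api/tags";
}

// Returns the configured models that are absent from the installed list.
// Ollama reports names with a tag suffix such as "llama3.2:latest",
// so a configured name matches either exactly or as the part before ":".
function missingModels(config: OllamaConfig, installed: string[]): string[] {
  const wanted = [config.chatModel, config.embeddingModel];
  return wanted.filter(
    (model) =>
      !installed.some((name) => name === model || name.startsWith(model + ":"))
  );
}

const config: OllamaConfig = {
  baseUrl: "http://localhost:11434",
  chatModel: "llama3.2",
  embeddingModel: "nomic-embed-text",
};

console.log(tagsUrl(config));
console.log(missingModels(config, ["llama3.2:latest"]));
```

The installed names would normally come from the `models[].name` field of the `/api/tags` response; any model reported as missing can be fetched with `ollama pull`.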

2. Index Your Vault

Use command: Calcifer: Re-index Vault

Or enable automatic background indexing in settings.

3. Start Chatting

- Click the bot icon in the left ribbon

- Or use command: Calcifer: Open Chat

Commands

| Command | Description |

|---|---|

| Open Chat | Open the chat sidebar |

| Re-index Vault | Rebuild the embedding index |

| Stop Indexing | Stop the current indexing process |

| Clear Embedding Index | Delete all embeddings |

| Index Current File | Index only the active file |

| Show Status | Display indexing stats and provider health |

| Show Memories | Open the memories management modal |

| Suggest Tags for Current Note | Get AI tag suggestions |

| Suggest Folder for Current Note | Get folder placement suggestions |

Settings Reference

Embedding Settings

| Setting | Default | Description |

|---|---|---|

| Enable Embedding | false | Toggle automatic indexing (enable after configuring provider) |

| Batch Size | 1 | Concurrent embedding requests |

| Chunk Size | 1000 | Characters per text chunk |

| Chunk Overlap | 200 | Overlap between chunks |

| Debounce Delay | 5000 | Milliseconds to wait before indexing changed files |

| Exclude Patterns | templates/** | Glob patterns to skip |
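
To make the interaction between Chunk Size and Chunk Overlap concrete, here is a minimal sketch of fixed-size character chunking with overlap, using the defaults from the table. It assumes a plain sliding window over the text; Calcifer's actual chunker may split differently (for example on paragraph or heading boundaries), and `chunkText` is a hypothetical name.

```typescript
// Split text into fixed-size character chunks that overlap their neighbors.
function chunkText(text: string, chunkSize = 1000, overlap = 200): string[] {
  if (chunkSize <= 0 || overlap < 0 || overlap >= chunkSize) {
    throw new Error("Need chunkSize > 0 and 0 <= overlap < chunkSize");
  }
  // Each new chunk starts this many characters after the previous one.
  const step = chunkSize - overlap;
  const chunks: string[] = [];
  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + chunkSize));
    // Stop once a chunk has reached the end of the text.
    if (start + chunkSize >= text.length) break;
  }
  return chunks;
}

// A 2500-character note yields chunks starting at offsets 0, 800, and 1600.
const lengths = chunkText("a".repeat(2500)).map((c) => c.length);
console.log(lengths);
```

With these defaults each chunk shares its last 200 characters with the start of the next one, so a sentence that straddles a boundary still appears whole in at least one chunk.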

RAG Settings

| Setting | Default | Description |

|---|---|---|

| Top K Results | 5 | Context chunks to retrieve |

| Minimum Score | 0.5 | Similarity threshold (0-1) |

| Include Frontmatter | true | Add metadata to context |

| Max Context Length | 8000 | Total context character limit |
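
The following sketch shows one way Top K Results, Minimum Score, and Max Context Length could fit together during retrieval. It assumes cosine similarity as the score and a simple character budget that stops adding chunks once the limit would be exceeded; the source does not state which similarity measure or truncation rule Calcifer uses, and `IndexedChunk`, `cosineSimilarity`, and `retrieve` are hypothetical names.

```typescript
// Hypothetical record for one embedded chunk of a note.
interface IndexedChunk {
  path: string; // note the chunk came from
  text: string;
  embedding: number[];
}

// Cosine similarity of two equal-length vectors; 0 if either is all zeros.
function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  const denom = Math.sqrt(normA) * Math.sqrt(normB);
  return denom === 0 ? 0 : dot / denom;
}

function retrieve(
  query: number[],
  index: IndexedChunk[],
  topK = 5,
  minScore = 0.5,
  maxContextLength = 8000
): IndexedChunk[] {
  // Score every chunk, drop weak matches, keep the best topK.
  const ranked = index
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.embedding) }))
    .filter((hit) => hit.score >= minScore)
    .sort((x, y) => y.score - x.score)
    .slice(0, topK);

  // Add chunks in rank order until the character budget would be exceeded.
  const selected: IndexedChunk[] = [];
  let used = 0;
  for (const { chunk } of ranked) {
    if (used + chunk.text.length > maxContextLength) break;
    selected.push(chunk);
    used += chunk.text.length;
  }
  return selected;
}
```

Under these assumptions, lowering Minimum Score admits more candidates, raising Top K keeps more of them, and Max Context Length caps how much of the result is actually sent to the model.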

Chat Settings

| Setting | Default | Description |

|---|---|---|

| System Prompt | (built-in) | Customize assistant behavior |

| Include Chat History | true | Send previous messages |

| Max History Messages | 10 | History limit |

| Temperature | 0.7 | Response creativity (0-2) |

| Max Tokens | 2048 | Response length limit |
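
As an illustration of Include Chat History and Max History Messages, the sketch below assembles the message list for a chat request, keeping only the most recent messages. The role/content message shape follows the common OpenAI-style chat format; `ChatMessage` and `buildMessages` are hypothetical names, and how Calcifer actually trims history is an assumption here.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

function buildMessages(
  systemPrompt: string,
  history: ChatMessage[],
  userInput: string,
  includeHistory = true,
  maxHistory = 10
): ChatMessage[] {
  // slice(-0) would return the whole array, so guard against maxHistory <= 0.
  const recent =
    includeHistory && maxHistory > 0 ? history.slice(-maxHistory) : [];
  return [
    { role: "system", content: systemPrompt },
    ...recent,
    { role: "user", content: userInput },
  ];
}
```

With the defaults, a conversation of 12 earlier messages is sent as the system prompt, the last 10 messages, and the new user message.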

Tool Calling Settings

| Setting | Default | Description |

|---|---|---|

| Enable Tool Calling | true | Allow AI to perform vault operations |

| Require Confirmation | false | Ask before executing destructive tools |

Memory Settings

| Setting | Default | Description |

|---|---|---|

| Enable Memory | true | Store persistent memories |

| Max Memories | 100 | Storage limit |

| Include in Context | true | Send memories with queries |

Auto-Tagging Settings

| Setting | Default | Description |

|---|---|---|

| Enable Auto-Tag | false | Activate tagging feature (opt-in) |

| Mode | suggest | auto (apply) or suggest (show modal) |

| Max Suggestions | 5 | Tags per note |

| Use Existing Tags | true | Prefer vault tags |

| Confidence Threshold | 0.8 | Auto-apply threshold (0-1) |
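
The relationship between Mode, Max Suggestions, and Confidence Threshold can be sketched as a small decision function. This assumes that in auto mode only suggestions at or above the threshold are applied and the rest are left for review, and that suggest mode never applies anything without confirmation; that split is an assumption, and `TagSuggestion` and `planTags` are hypothetical names.

```typescript
interface TagSuggestion {
  tag: string;
  confidence: number; // 0-1, as reported by the model
}

type TagMode = "auto" | "suggest";

function planTags(
  suggestions: TagSuggestion[],
  mode: TagMode = "suggest",
  maxSuggestions = 5,
  threshold = 0.8
): { apply: string[]; review: string[] } {
  // Keep the most confident suggestions, without mutating the input.
  const top = [...suggestions]
    .sort((a, b) => b.confidence - a.confidence)
    .slice(0, maxSuggestions);

  const apply: string[] = [];
  const review: string[] = [];
  for (const s of top) {
    if (mode === "auto" && s.confidence >= threshold) {
      apply.push(s.tag); // confident enough to apply directly
    } else {
      review.push(s.tag); // shown to the user for confirmation
    }
  }
  return { apply, review };
}
```

The same pattern would apply to the folder suggestions in Organization Settings, with a single suggestion and the 0.9 default threshold.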

Organization Settings

| Setting | Default | Description |

|---|---|---|

| Enable Auto-Organize | true | Activate folder suggestions |

| Mode | suggest | auto (move) or suggest (ask) |

| Confidence Threshold | 0.9 | Auto-move threshold (0-1) |

Performance Settings

| Setting | Default | Description |

|---|---|---|

| Enable on Mobile | true | Run on mobile devices |

| Rate Limit (RPM) | 60 | API requests per minute |

| Request Timeout | 120 | Seconds before timeout |

| Use Native Fetch | false | Use the native fetch API, e.g. for endpoints whose certificates are issued by an internal CA |
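
To show what a requests-per-minute limit means in practice, here is a minimal sliding-window sketch: it remembers the timestamps of requests made in the last 60 seconds and reports how long a caller should wait before sending the next one. The `RateLimiter` class is hypothetical and the sliding-window approach is an assumption; the source does not describe how Calcifer enforces its limit.

```typescript
class RateLimiter {
  private timestamps: number[] = [];
  private readonly rpm: number;

  constructor(rpm = 60) {
    this.rpm = rpm;
  }

  // Returns 0 and records the request if it may be sent at time `now`
  // (milliseconds); otherwise returns how many milliseconds to wait.
  delayFor(now: number): number {
    const windowStart = now - 60_000;
    // Forget requests that have left the 60-second window.
    this.timestamps = this.timestamps.filter((t) => t > windowStart);
    if (this.timestamps.length < this.rpm) {
      this.timestamps.push(now);
      return 0;
    }
    // The oldest request in the window determines when a slot frees up.
    return this.timestamps[0] + 60_000 - now;
  }
}

const limiter = new RateLimiter(60);
console.log(limiter.delayFor(Date.now()));
```

A caller would wait for the returned delay and then ask again; a lower limit spreads embedding requests out, which trades indexing speed for less load on the provider.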

UI Settings

| Setting | Default | Description |

|---|---|---|

| Show Context Sources | true | Display sources in chat responses |

| Show Indexing Progress | true | Show indexing notifications |

Mobile Support

Calcifer is fully functional on mobile devices:

- Chat interface optimized for touch

- Background indexing respects mobile resources

- All features work offline with local Ollama

Privacy & Security

| Aspect | Details |

|---|---|

| Local Processing | All embeddings stored locally in IndexedDB |

| No Cloud Storage | The plugin stores its data (index, memories) only on your device; note content is sent only to the endpoint you configure |

| API Choice | Use local Ollama for complete privacy |

| Memory Control | View and delete any stored memories |

Network Usage Disclosure

Important: No data is sent to any server until you configure an API endpoint.

| Service | Purpose | Data Sent |

|---|---|---|

| Ollama (local/remote) | Chat completions, embeddings | Note content for context, user messages |

| OpenAI (or compatible) | Chat completions, embeddings | Note content for context, user messages |

- You control which provider to use (local Ollama = no external network)

- Note content is sent as context for AI responses (chunks of ~1000 chars)

- No telemetry or analytics are collected by this plugin

Development

# Clone the repository

git clone https://github.com/anyesh/obsidian-calcifer.git

cd obsidian-calcifer

# Install dependencies

npm install

# Development mode (watch)

npm run dev

# Production build

npm run build

Troubleshooting

"No provider configured"

- Add at least one API endpoint in settings

- Ensure the endpoint is enabled

- Test the connection

"Connection failed"

- Check if Ollama is running (ollama serve)

- Verify the base URL is correct

- Check firewall/network settings

Indexing is slow

- Reduce batch size for limited resources

- Exclude large folders (templates, archives)

- Mobile devices may need smaller chunk sizes

Chat responses are irrelevant

- Ensure vault is indexed (check status bar)

- Lower the minimum score threshold

- Increase Top K for more context

License

MIT License — see LICENSE for details.

Acknowledgments

Made for the Obsidian community
