LM Studio Connect

pending, by Joe Petrakovich

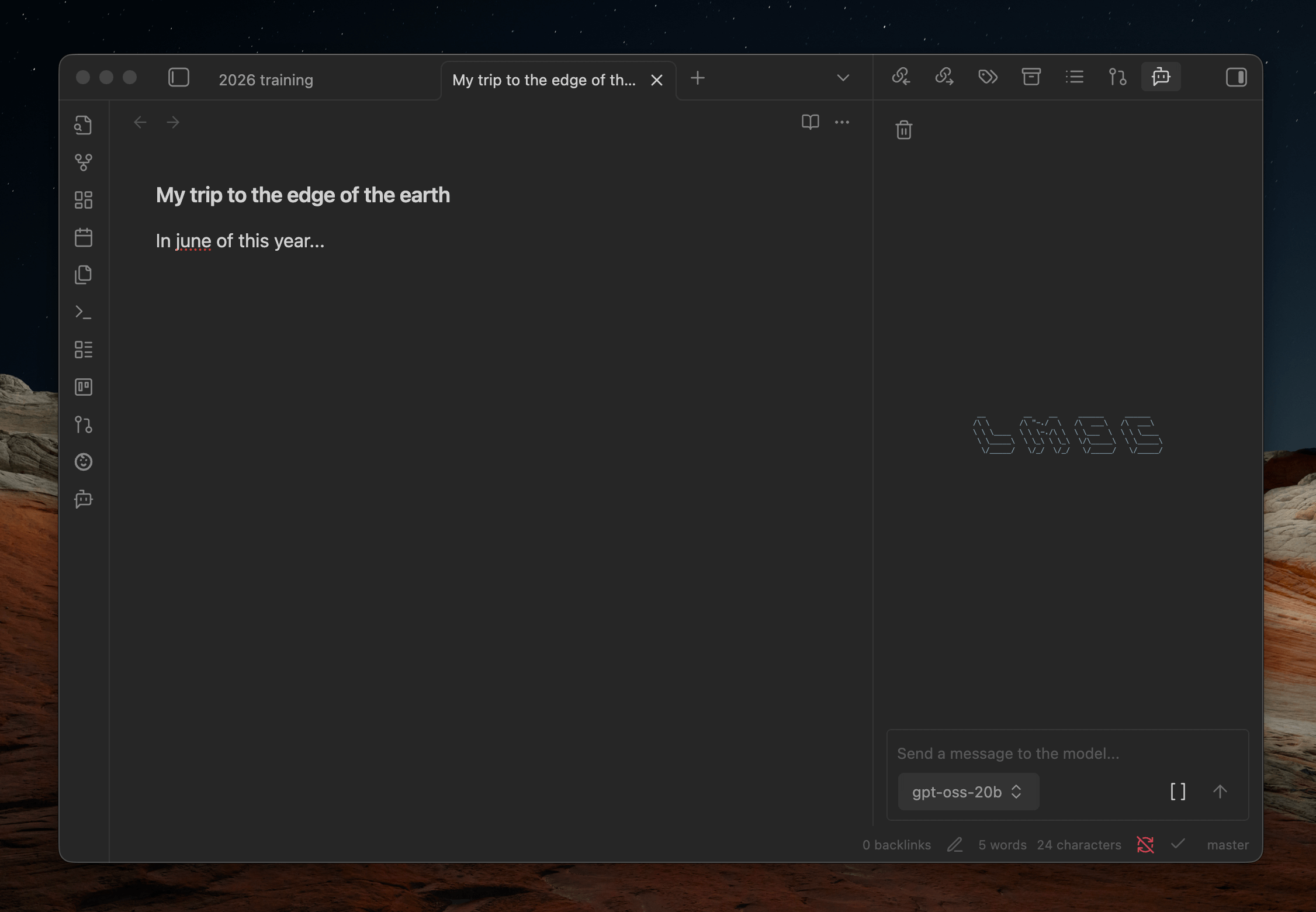

Adds an AI chat interface that connects to LM Studio so you can chat with your notes privately and offline.

LM Studio Connect

An Obsidian plugin that provides an AI Chat interface to an LM Studio instance. Allows you to use LLMs with your notes privately and offline.

Bugs, Issues, and Feature Requests

How to use

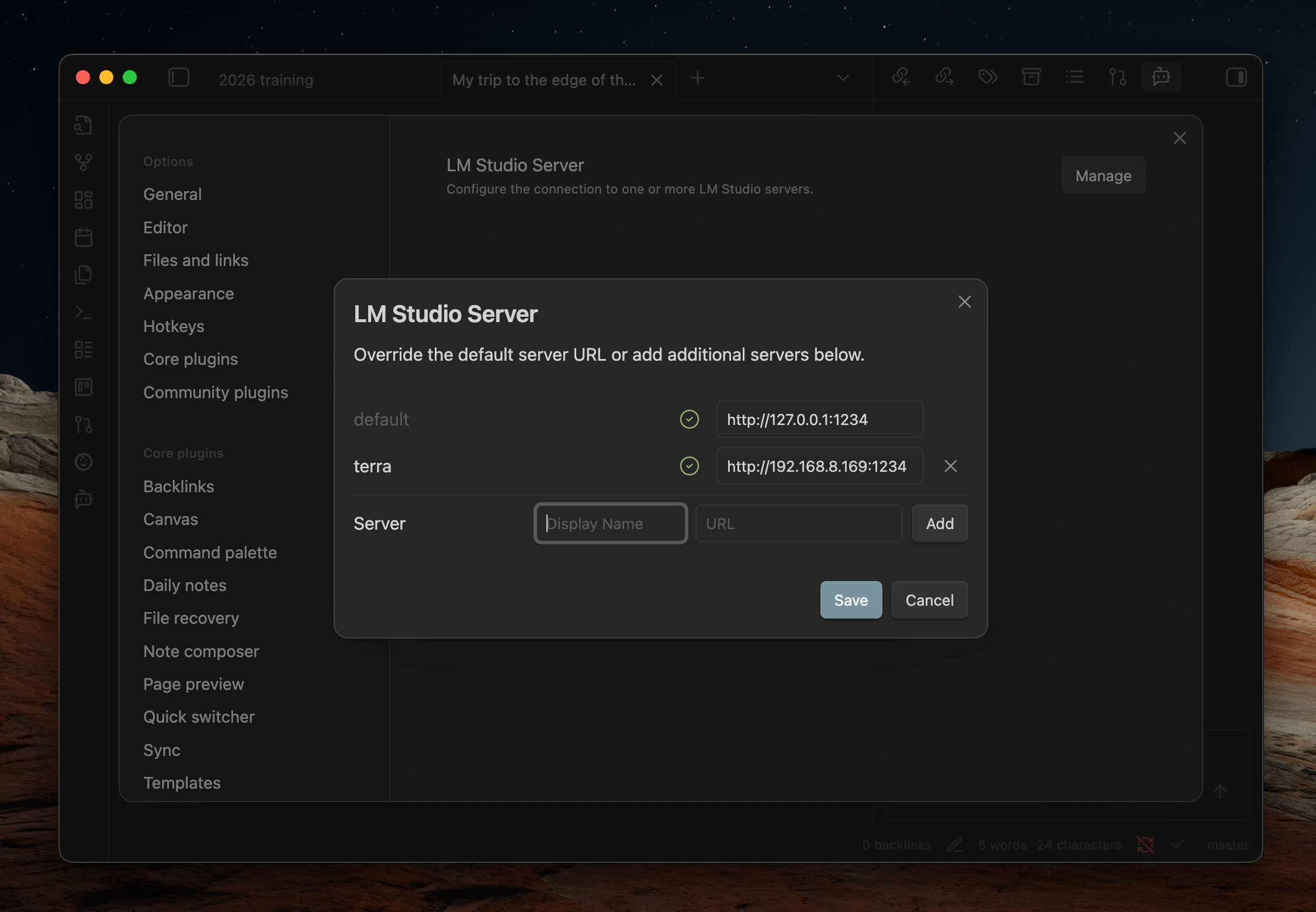

- First, make sure LM Studio is set up on your machine and that its server is enabled with the CORS option turned on. Note: if you plan to use this plugin on your phone, you may also want to enable the "Serve on local network" setting, which may require changing your firewall settings.

- Once the plugin is installed, visit the plugin's settings page in Obsidian and verify it can connect to LM Studio.
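If the settings page cannot connect, it can help to check the server from outside Obsidian. The sketch below is not the plugin's own code. It assumes LM Studio is serving at its default address (`http://localhost:1234`) and exposes the OpenAI-compatible `GET /v1/models` endpoint. The names `modelsUrl` and `listModels` are made up for this illustration.

```typescript
// Assumption: LM Studio's local server listens at its default address
// and exposes the OpenAI-compatible GET /v1/models endpoint.
const DEFAULT_BASE_URL = "http://localhost:1234";

// Builds the models endpoint URL, tolerating trailing slashes in the base URL.
function modelsUrl(baseUrl: string = DEFAULT_BASE_URL): string {
  return baseUrl.replace(/\/+$/, "") + "/v1/models";
}

// Returns the model ids reported by the server.
// Throws if the server is unreachable or answers with a non-2xx status.
async function listModels(
  baseUrl: string = DEFAULT_BASE_URL,
  fetchFn: typeof fetch = fetch,
): Promise<string[]> {
  const response = await fetchFn(modelsUrl(baseUrl));
  if (!response.ok) {
    throw new Error(`LM Studio responded with HTTP ${response.status}`);
  }
  const body = (await response.json()) as { data?: { id: string }[] };
  return (body.data ?? []).map((model) => model.id);
}
```

Calling `listModels().then(console.log).catch(console.error)` should print the available model ids when the server is reachable. When testing from a phone, pass the LAN address of the machine running LM Studio as `baseUrl` instead of `localhost`.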

Chat view

- You can reveal the chat window via the Obsidian command palette or ribbon menu.

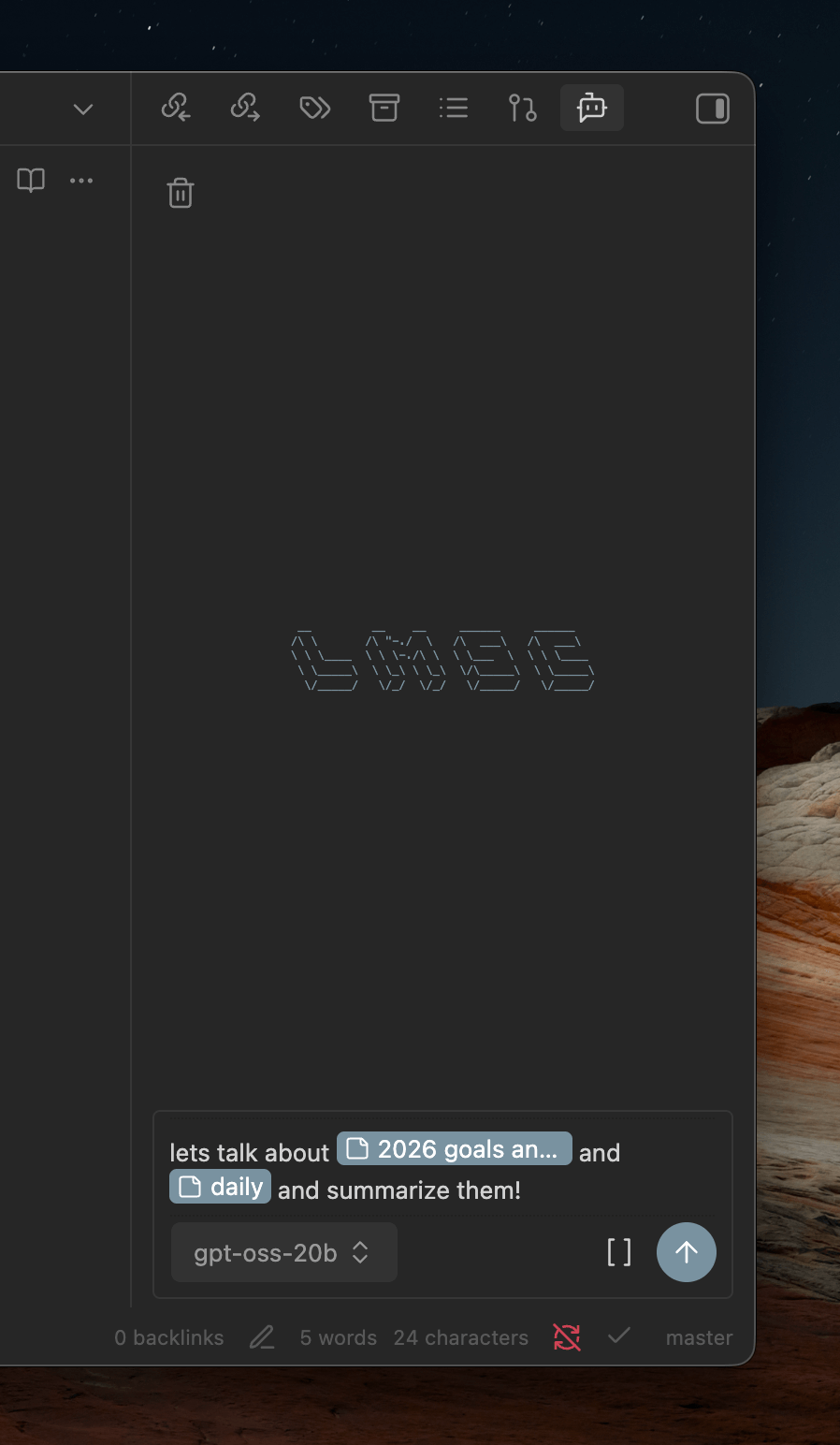

- To chat with notes, begin typing '[[' to open a file picker or use the file picker button at the bottom of the chatbox.

- The LLM is also made aware of your currently open notes, so you can talk about them without referencing them explicitly.

Codeblock prompts

You can also embed a prompt directly in your notes using a fenced codeblock with the lmsc language identifier:

```lmsc
prompt: What's hot on reddit today?
```

Options:

- `prompt` (required) - The prompt to send to the LLM

- `hideToolUse` (optional, default: `false`) - When `true`, hides tool call details from the response
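To make the option format concrete, here is a minimal sketch of how such a block could be read. It is a hypothetical illustration, not the plugin's actual parser. It assumes one `key: value` option per line, split at the first colon. The names `LmscOptions` and `parseLmscBlock` are invented for this example.

```typescript
// Hypothetical option shape, based only on the two documented options.
interface LmscOptions {
  prompt: string;
  hideToolUse: boolean;
}

// Parses "key: value" lines from the body of an lmsc codeblock.
// Assumption: one option per line, split at the first colon.
function parseLmscBlock(source: string): LmscOptions {
  const values = new Map<string, string>();
  for (const line of source.split("\n")) {
    const colon = line.indexOf(":");
    if (colon === -1) continue; // skip blank or malformed lines
    values.set(line.slice(0, colon).trim(), line.slice(colon + 1).trim());
  }
  const prompt = values.get("prompt");
  if (!prompt) {
    throw new Error("lmsc block is missing the required prompt option");
  }
  // hideToolUse defaults to false unless it is exactly "true".
  return { prompt, hideToolUse: values.get("hideToolUse") === "true" };
}
```

Under these assumptions, a block body of `prompt: What's hot on reddit today?` followed by a `hideToolUse: true` line would yield that prompt with tool call details hidden. Splitting at the first colon only means a prompt that itself contains a colon is kept whole.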

Note: Not affiliated with the official LM Studio company, Element Labs, Inc.
